import pandas as pd

import requests

import pickle

import os.path

import json

import time

from tqdm import tqdm

import numpy as np

import seaborn as sns

from pathlib import Path

# import plotly.express as px

adds_df_path = Path("adds_df.parquet")

if not adds_df_path.exists():

# Pulled a list of addresses for my community from county tax website

cols_of_interest = [

"prop_id",

"hood_cd",

"market",

"school",

"city",

"county",

"legal_desc",

"tract_or_lot",

"abs_subdv_cd",

"situs_num",

"situs_street",

"situs_street_sufix",

"situs_city",

"situs_state",

"situs_zip",

"addr_line1",

"addr_line2",

"addr_line3",

"addr_city",

"addr_state",

"zip",

]

adds_df = pd.read_excel("address_list.xlsx")

adds_df = adds_df[cols_of_interest]

# Clean up all of the columns that contain property address data

# ['situs_num', 'situs_street', 'situs_street_sufix', 'situs_city', 'situs_state', 'situs_zip']

adds_df["situs_num"] = adds_df["situs_num"].fillna(0)

adds_df["situs_street"] = adds_df["situs_street"].fillna("")

adds_df["situs_street_sufix"] = adds_df["situs_street_sufix"].fillna("")

adds_df["situs_city"] = adds_df["situs_city"].fillna("Pearland")

adds_df["situs_state"] = adds_df["situs_state"].fillna("TX")

adds_df["situs_zip"] = adds_df["situs_zip"].fillna("77584")

adds_df["situs_num"] = adds_df["situs_num"].apply(lambda x: str(int(x)))

adds_df["situs_street"] = adds_df["situs_street"].apply(lambda x: str(x).strip())

adds_df["situs_street_sufix"] = adds_df["situs_street_sufix"].apply(

lambda x: str(x).strip()

)

adds_df["situs_city"] = adds_df["situs_city"].apply(lambda x: str(x).strip())

adds_df["situs_state"] = adds_df["situs_state"].apply(lambda x: str(x).strip())

adds_df["situs_zip"] = adds_df["situs_zip"].apply(lambda x: str(int(x)))

# Create new columns to construct full address which will be used in Bing Maps searches

adds_df.insert(0, "house_address_partial", "", allow_duplicates=False)

adds_df.insert(0, "house_address", "", allow_duplicates=False)

# populate new columns for creating full address

adds_df["house_address_partial"] = (

adds_df["situs_num"]

+ " "

+ adds_df["situs_street"]

+ " "

+ adds_df["situs_street_sufix"]

)

adds_df["house_address"] = (

adds_df["house_address_partial"]

+ ", "

+ adds_df["situs_city"]

+ ", "

+ adds_df["situs_state"]

+ " "

+ adds_df["situs_zip"]

)

adds_df["prop_id"] = adds_df["prop_id"].apply(lambda x: int(x))

adds_df = adds_df.set_index("prop_id")

# testPropid = adds_df.index[0]

# testAddress = adds_df["house_address"].iloc[0]

# print([testPropid, testAddress])

with open("secrets.pickle", "rb") as f:

secrets = pickle.load(f)

# Route payloads contains details for 4 different navigational routes:

# 1) morning Northbound (AM-N) and 2) its afternoon counterpart (PM-N)

# 3) morning Southbound (AM-S) and 4) its afternoon counterpart (PM-S)

# I had to make afternoon times as arrival or else api didn't successfully respond

route_payloads = {

"AM-N": {

"wp.1": "29.595583, -95.386315",

"dateTime": "11/08/2023 08:00:00",

"timeType": "Departure",

},

"PM-N": {

"wp.0": "29.594770, -95.386830",

"dateTime": "11/08/2023 17:30:00",

"timeType": "Arrival",

},

"AM-S": {

"wp.1": "29.549921, -95.387785",

"dateTime": "11/08/2023 08:00:00",

"timeType": "Departure",

},

"PM-S": {

"wp.0": "29.543948, -95.387128",

"dateTime": "11/08/2023 17:30:00",

"timeType": "Arrival",

},

}

def bing_maps_query(house_address, route_payload):

my_api_key = secrets["api_key"]

bing_map_url = r"http://dev.virtualearth.net/REST/V1/Routes/Driving"

payload = {

"avoid": "tolls",

"key": my_api_key,

"distanceUnit": "mi",

"travelMode": "Driving",

"optimize": "timeWithTraffic",

}

payload = payload | route_payload

if "wp.0" in payload.keys():

payload["wp.1"] = house_address

else:

payload["wp.0"] = house_address

r = requests.get(

"http://dev.virtualearth.net/REST/V1/Routes/Driving", params=payload

)

return r

def format_response_filepath(propid, descriptor):

subfolder = "query_responses"

response_filename = str(propid) + "|" + descriptor + ".pickle"

response_path = Path(__file__).parent / subfolder / response_filename

return response_path

# get routes for all addresses

propid_subset = list(adds_df.index)

# add columns for route drive distances and times

new_col_initialVals = {

"travelDistance": 0.0,

"distanceUnit": "",

"travelDuration": 0,

"travelDurationTraffic": 0,

"durationUnit": "",

}

new_columns = {

"|".join([route, col]): new_col_initialVals[col]

for col in new_col_initialVals

for route in route_payloads

}

for col in new_columns:

adds_df.insert(1, col, new_columns[col], allow_duplicates=False)

# add columns for address latitude/longitude

adds_df.insert(1, "lon", 0.0, allow_duplicates=False)

adds_df.insert(1, "lat", 0.0, allow_duplicates=False)

# loop through property ID / addresses.

# Check if we've already saved the route info, otherwise query maps API and save the response

for propid in tqdm(propid_subset):

prop_address = adds_df.loc[propid]["house_address"]

for route in route_payloads:

prop_route_filepath = format_response_filepath(propid, route)

if prop_route_filepath.exists():

with open(prop_route_filepath, "rb") as f:

r = pickle.load(f)

else:

r = bing_maps_query(prop_address, route_payloads[route])

with open(prop_route_filepath, "wb") as f:

pickle.dump(r, f)

# Extract some data from good responses

if r.json()["statusCode"] == 200:

route_data = r.json()["resourceSets"][0]["resources"][0]

result_keys = {

"travelDistance": float,

"distanceUnit": str,

"travelDuration": int,

"travelDurationTraffic": int,

"durationUnit": str,

}

# get results of interest, cast into proper data types, insert into df

results = {

key: result_keys[key](route_data[key]) for key in result_keys

}

new_columns = ["|".join([route, key]) for key in result_keys]

adds_df.loc[propid, new_columns] = [results[key] for key in results]

# get latitude/longitude

if route.startswith("AM"):

adds_df.loc[propid, ["lat", "lon"]] = route_data["routeLegs"][0][

"startLocation"

]["point"]["coordinates"]

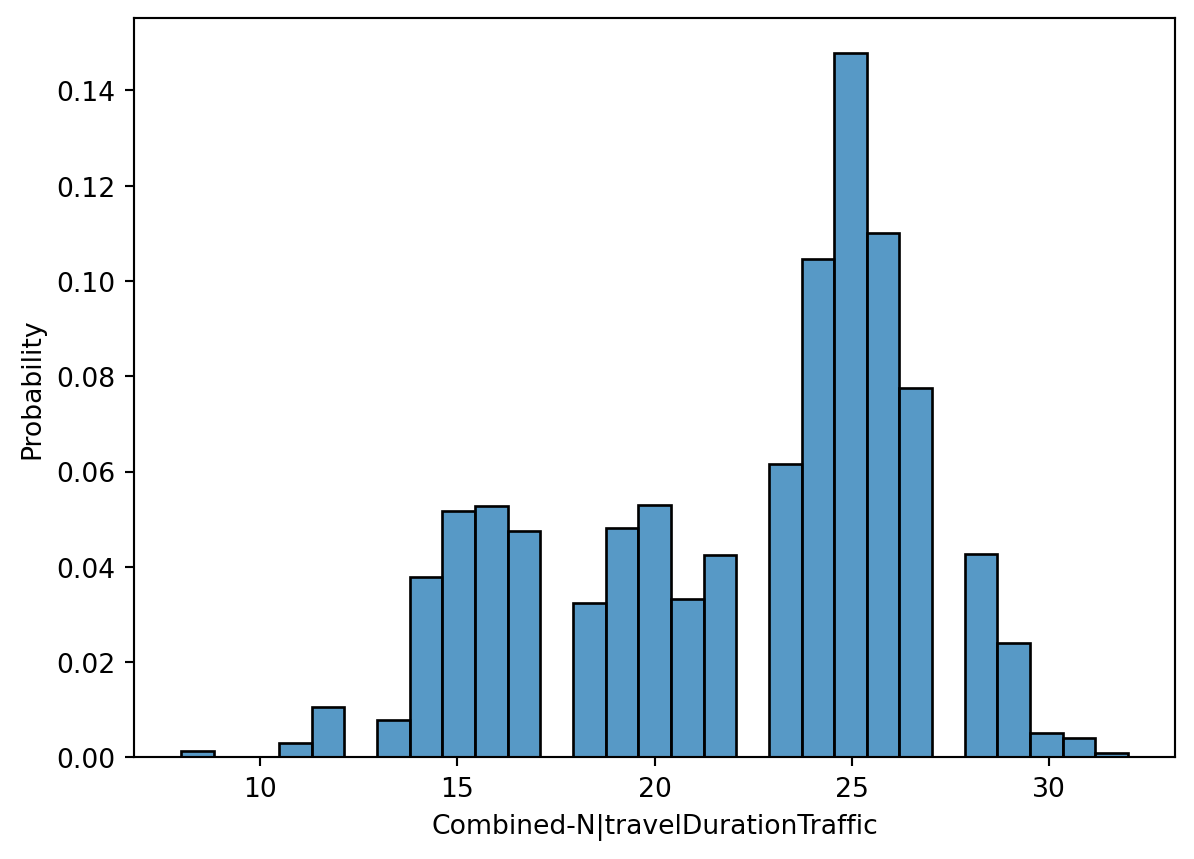

adds_df["Combined-N|travelDurationTraffic"] = np.round(

(adds_df["AM-N|travelDurationTraffic"] + adds_df["PM-N|travelDurationTraffic"])

/ 60

)

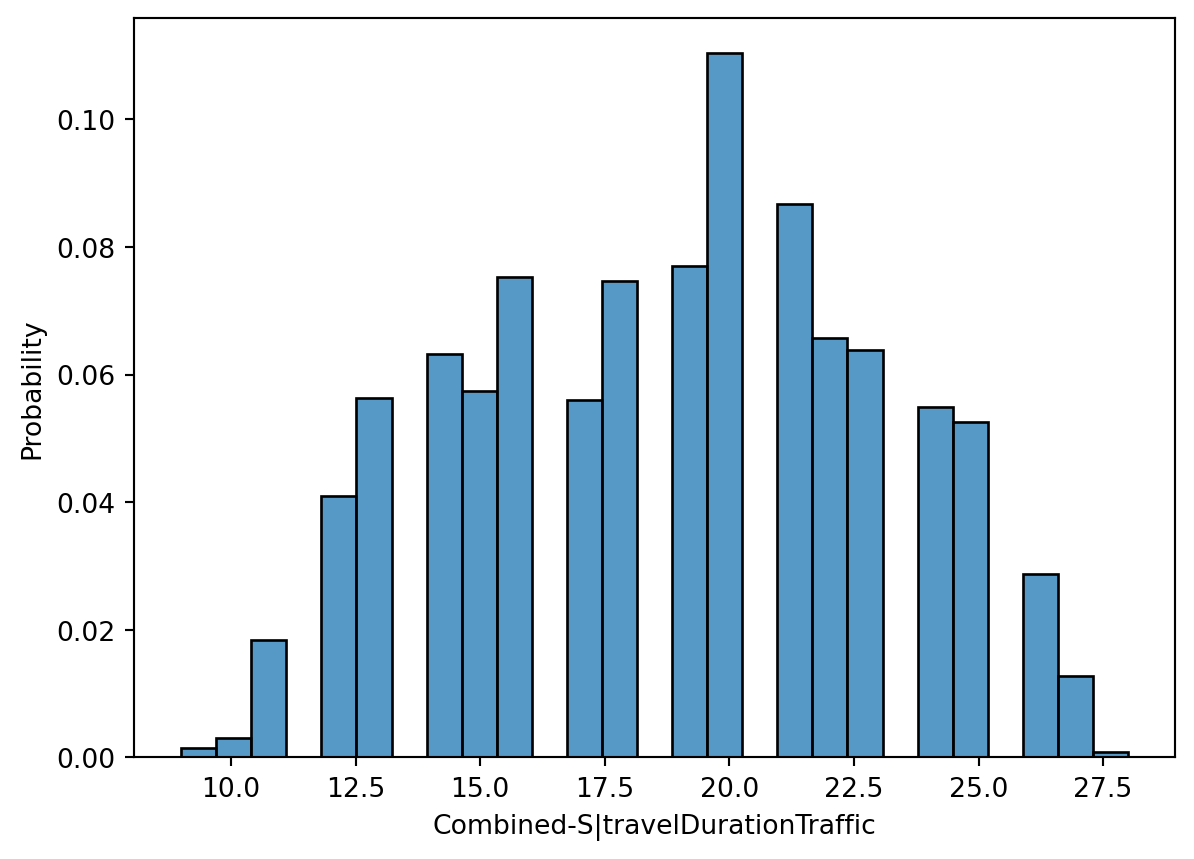

adds_df["Combined-S|travelDurationTraffic"] = np.round(

(adds_df["AM-S|travelDurationTraffic"] + adds_df["PM-S|travelDurationTraffic"])

/ 60

)

adds_df = adds_df.astype({"tract_or_lot": str})

adds_df.to_parquet(adds_df_path)

else:

adds_df = pd.read_parquet(adds_df_path)